17 Mar

When AI Generates the Answers, Are We Losing Our Ability to Ask the Questions?

Millions of students and professionals currently use generative artificial intelligence to draft documents, solve equations, and summarize vast datasets. This widespread adoption increases raw output and efficiency. However, prioritizing immediate answers over the process of learning creates a measurable deficit in human analytical skills and memory retention.

As we integrate these systems into education and corporate environments, we are encountering the AI convenience trap: the immediate availability of generated answers is systematically replacing the cognitive effort required to develop independent intelligence.

Understanding Cognitive Debt

Cognitive debt is the gradual loss of critical thinking, problem-solving, and memory capabilities that occurs when humans bypass the mental friction required to learn and create.

A 2025 MIT Media Lab study that measured brain activity during AI-assisted writing tasks provided evidence of this deficit. Researchers found that participants who relied on AI showed 47% lower neural connectivity than those who wrote independently. Furthermore, 83% of the AI users could not recall a single sentence from the text they had produced minutes earlier. When people use AI to bypass the synthesis of information, the brain does not form the neural pathways required for deep comprehension and long-term memory.

The Educational Impact

The education sector is the primary testing ground for this technological shift. Educators globally report a rapid decline in students’ abilities to critique sources, engage in sustained reading, and construct original arguments.

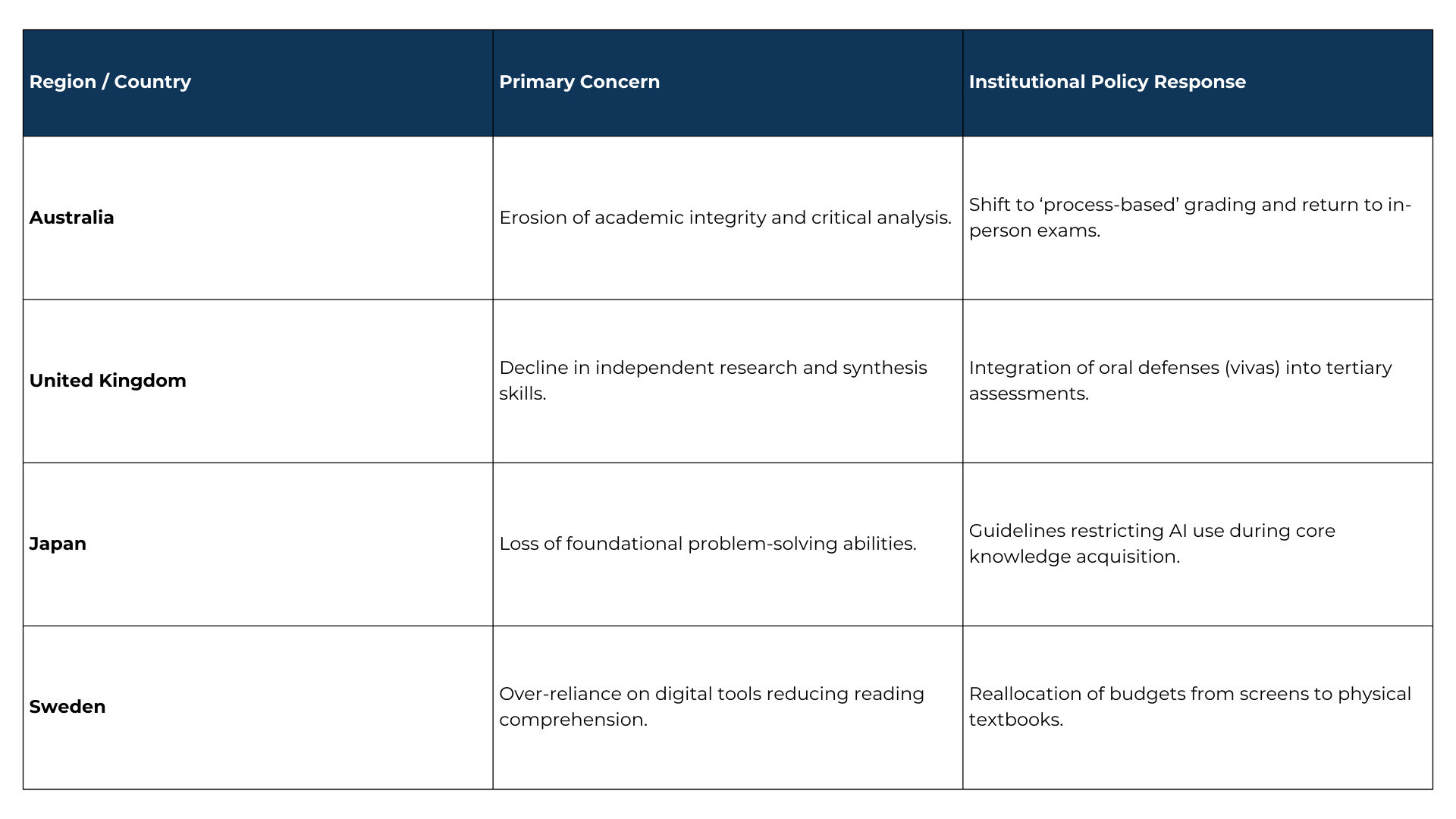

Generative AI Impact and Policy Responses in Global Education

Sources:
¹ Australia: Group of Eight (Go8), Principles on the Use of Generative Artificial Intelligence (2023) and Parliamentary Submission on AI in Education (July 2023).
² United Kingdom: The Russell Group, Principles on the Use of Generative AI Tools in Education (July 2023).
³ Japan: Ministry of Education, Culture, Sports, Science and Technology (MEXT), Guidelines for the Use of Generative AI in Primary and Secondary Education (July 2023).
⁴ Sweden: Ministry of Education and Research policy updates (2023) and The Karolinska Institute, Statement on the National Digitalisation Strategy in Education (August 2023).

Case Studies: Reclaiming the Learning Process

Recognizing this threat, educational systems are implementing policies to enforce human cognitive effort.

Sweden’s Return to Analog Learning (2023)

After 15 years of digital-first policies, the Swedish government allocated 685 million kronor (€60 million) to remove mandatory digital devices in early education and reintroduce physical textbooks. This policy reversal is significant because it represents national recognition, backed by medical research institutions such as the Karolinska Institute, that physical, unaided cognitive effort is a biological requirement for childhood brain development and reading comprehension.

Australia’s ‘Assess the Process’ Model (Early 2023)

Following the public launch of ChatGPT, tertiary institutions such as the University of Sydney overhauled their assessment frameworks. The model is significant because it shifts evaluation from the final output to the student’s workflow. Educators implemented a “two-lane approach,” requiring students to submit early drafts, track their research history, and verbally defend their work in secure, in-person settings. This ensures that the student actually performs the analytical work.

The Workplace Threat: Context Loss and AI Errors

The cognitive deficit extends directly into the corporate environment. When employees use AI to generate strategic reports without conducting the initial data analysis, they experience false fluency. The output appears polished, but the employee lacks an understanding of the underlying logic.

This results in compounding operational errors. Without deep foundational industry expertise, professionals can easily overlook subtle mistakes, confidently presenting incorrect AI-generated information. Furthermore, institutional context is lost because the underlying logic was never internalized by the human workforce.

Corporate Policies for AI Governance

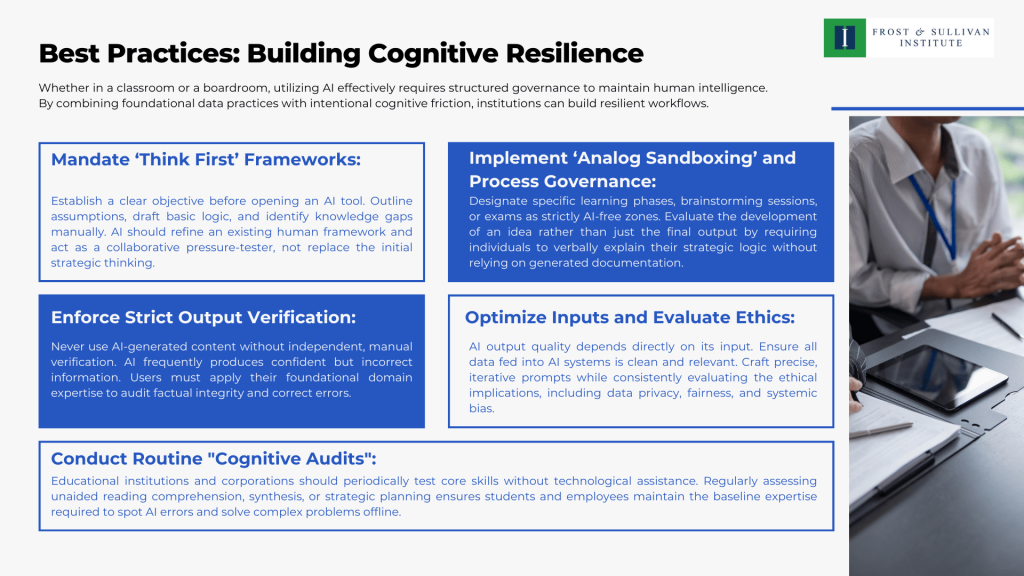

To prevent the degradation of skills and mitigate AI errors, companies are establishing strict governance frameworks.

Network and Domain Restrictions: Following incidents where employees accidentally submitted sensitive proprietary data into public generative AI tools, several major multinational technology and electronics corporations have heavily restricted or temporarily banned the use of unauthorized AI on their networks. This approach highlights the necessity of domain-level controls to prevent data exposure and halt unregulated AI reliance.

Human-in-the-Loop (HITL) Mandates: Industries dealing with high-stakes data enforce strict HITL policies. In pharmaceutical manufacturing, AI models monitor real-time production processes, but human operators must explicitly validate the AI’s outputs before corrective actions are taken. Similarly, leading AI training and data annotation firms mandate HITL to audit AI-generated code and text. This forces the human worker to remain actively engaged in the decision-making process, preserving their domain expertise and accountability.

The Path Forward

Addressing this cognitive deficit requires moving beyond simply detecting AI use or cleaning up AI errors. We must focus on prevention and the circularity of foundational knowledge. Prevention involves designing workflows that mandate human reasoning at the source, while circularity ensures individuals continuously loop back to practice core analytical skills without technological assistance. If we rely entirely on algorithms for our answers, we forfeit the expertise required to validate, correct, and lead the systems we build.

Blog by Asha Sridar, Manager, Frost & Sullivan Institute